In a recent post, we discussed optimizing a face detection system based on classical computer vision techniques to run on a GPU using OpenCV with CUDA enabled. The optimization improved performance from 3-4 Hz to about 10 Hz. As a recap of the system setup used in that exercise, the Raspberry Pi camera captured raw images and published them to a ROS image topic. The Jetson TX1 subscribed to the raw image topic and handled the heavy lifting, which included preprocessing the raw image and detecting any faces. The two machines were connected over Wi-Fi.

We use a similar setup in this exercise: the Jetson TX1 takes care of object detection using the YOLOv2 algorithm, while the Raspberry Pi is solely responsible for streaming compressed RGB images. Once again I am amazed at how seamlessly ROS helps integrate different languages and frameworks (C++11, Python, OpenCV, PyTorch).

source: ros_object_detection

YOLO: You Only Look Once

This YouTube recording of a presentation given by the creators of YOLO, titled "YOLO9000: Better, Faster, Stronger", is a sufficient introduction to the algorithm. It also covers the techniques used to transfer weights learned on ImageNet for classification to object detection. Particularly informative were the combination of the ImageNet and COCO data sets using a word tree [28:00] and the discussion of backpropagating different errors depending on which data set the input came from.
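To make the word tree idea concrete, here is a minimal sketch of how a label's absolute probability can be obtained by multiplying conditional probabilities along the path from that label up to the root. The tree, the labels, and the probability values are made up for illustration, and `absolute_probability` is a hypothetical helper, not code from the YOLO authors.

```python
# Hypothetical miniature word tree, stored as child -> parent.
# The root ("physical object") has no parent.
PARENT = {
    "physical object": None,
    "animal": "physical object",
    "dog": "animal",
    "terrier": "dog",
}

# Made-up conditional probabilities Pr(node | parent), standing in for
# what a network would predict at each level of the tree.
COND_PROB = {
    "animal": 0.9,
    "dog": 0.8,
    "terrier": 0.5,
}


def absolute_probability(label, parent=PARENT, cond_prob=COND_PROB):
    """Multiply Pr(node | parent) along the path from `label` to the root."""
    prob = 1.0  # the root is assumed to have probability 1
    node = label
    while parent[node] is not None:
        prob *= cond_prob[node]
        node = parent[node]
    return prob


if __name__ == "__main__":
    for name in ("animal", "dog", "terrier"):
        print(name, round(absolute_probability(name), 3))
```

A coarse label such as "animal" keeps a high probability even when the fine-grained label is uncertain, which is what lets classification and detection data sets with different label granularity share one tree.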

System Setup

The launch file loads the configuration details found in yolo2.yaml and stores the path details on the parameter server. Next, the service run_inference_yolo2, which is specified in run_inference_yolo2.py in the /scripts directory, is initialized and launched. Finally, the object detection node is started. The node will wait until the service is available before making a request.

The system is quite simple, as the hard work of object detection is abstracted behind the service call.
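The wait-then-request pattern on the node side can be sketched as follows. This is only an illustration under assumptions: `call_when_available` is a hypothetical helper, and the service type and request layout used by run_inference_yolo2 are not given in this post, so they are left as parameters. The `ros` argument is expected to be the `rospy` module; passing it in rather than importing it lets the function be exercised without a running ROS master.

```python
def call_when_available(ros, service_name, service_type, request, timeout=None):
    """Block until `service_name` is advertised, then send a single request.

    `ros` is assumed to be the rospy module (or a stand-in that offers
    `wait_for_service` and `ServiceProxy`). `service_type` is the package's
    own service class, which this post does not show.
    """
    # Blocks until the service server is up, or raises once `timeout` expires.
    ros.wait_for_service(service_name, timeout=timeout)
    # A ServiceProxy is a callable handle bound to the named service.
    proxy = ros.ServiceProxy(service_name, service_type)
    return proxy(request)
```

Inside a real node, the call would look something like `call_when_available(rospy, "run_inference_yolo2", SomeSrvType, request)`, where `SomeSrvType` is a placeholder for whatever service definition the package actually ships.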

Performance

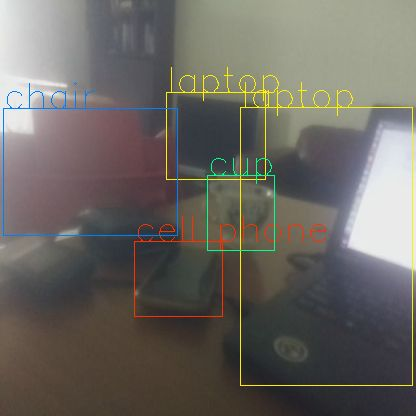

Face detection using classical computer vision techniques with CUDA enabled ran at 10 fps on the Jetson TX1. Object detection using YOLOv2 is a much more demanding task, as this implementation detects 80 different classes. Performance, measured as the publishing rate, was 3.3-3.8 Hz, and overclocking raised it to 5 Hz. Considering the number of classes covered this is amazing, and it is understandable why there is so much hope for deep learning technologies. The accompanying image is a snapshot of the results.
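For context, a publishing rate can be turned into a per-frame time budget with simple arithmetic. The sketch below estimates a mean rate from message arrival times and converts a rate to a period. Both helper names are hypothetical, and the post does not say which tool produced the numbers above, so this is an approximation of the idea rather than the measurement actually used.

```python
def publish_rate_hz(stamps):
    """Mean publishing rate from a list of arrival times in seconds."""
    if len(stamps) < 2:
        raise ValueError("need at least two timestamps")
    # n messages span n - 1 intervals.
    return (len(stamps) - 1) / (stamps[-1] - stamps[0])


def period_ms(rate_hz):
    """Average time between messages, in milliseconds."""
    return 1000.0 / rate_hz


if __name__ == "__main__":
    for rate in (3.3, 3.8, 5.0, 10.0):
        print(f"{rate} Hz -> {period_ms(rate):.0f} ms per frame")
```

By this arithmetic, 3.3-3.8 Hz corresponds to roughly 263-303 ms per frame, 5 Hz to 200 ms, and the earlier face detector's 10 Hz to 100 ms.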

Summary

This setup, though not completely on the edge, runs over a local network and does not require cloud access. Having a resource-constrained worker agent depend on a mother machine is a concept worth exploring further.

References

- https://www.youtube.com/watch?v=GBu2jofRJtk